Bug #4723

[closed] Can't forward UDP fragmented packets with scrubbing enabled.

Description

I have a use case where I couldn't forward fragmented UDP packets through a site-to-site OpenVPN tunnel. The issue isn't linked to OpenVPN itself but solely to the fact that scrubbing is applied to outgoing traffic.

Here's what happens:

1) Fragments are entering LAN interface

2) Scrub applies and fragments are reassembled

3) The firewall can process the reassembled packet

4) The kernel fragments the packet again so it can leave through any other interface (VPN, OPT, WAN, etc.)

5) Scrub out reassembles the packet

6) The packet is too big to leave the interface, so it is dropped.
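The six steps above can be sketched as a toy model. This is only an illustration of the sequence as described in this report, not pf's actual logic; the function names, the 20-byte IPv4 header, and the sample sizes are assumptions made for the example.

```python
IP_HEADER = 20  # bytes, IPv4 header without options (assumption)

def split(payload_len: int, mtu: int) -> list:
    """Split an IP payload into fragment payload sizes that fit the MTU."""
    chunk = (mtu - IP_HEADER) // 8 * 8  # fragment payloads are multiples of 8
    return [min(chunk, payload_len - off) for off in range(0, payload_len, chunk)]

def forward(payload_len: int, mtu: int, scrub_out: bool) -> str:
    """Walk one packet through steps 1-6 of the report (toy model)."""
    incoming = split(payload_len, mtu)   # 1) fragments enter the LAN interface
    whole = sum(incoming)                # 2) scrub in reassembles them
    outgoing = split(whole, mtu)         # 4) the kernel fragments again for egress
    if scrub_out:
        whole = sum(outgoing)            # 5) scrub out reassembles once more
        if whole + IP_HEADER > mtu:      # 6) too big for the interface
            return "dropped"
    return f"forwarded as {len(outgoing)} fragment(s)"

print(forward(2000, 1500, scrub_out=True))
print(forward(2000, 1500, scrub_out=False))
```

In this model a packet that never needed fragmentation is unaffected by scrub out, which is consistent with small packets and TCP working while large UDP datagrams fail.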

I managed to fix this bug by replacing "scrub on" with "scrub in on" in /etc/inc/filter.inc. Anyway, is there a need (besides random-id) to do scrubbing for outgoing traffic? Maybe it would be possible to disable scrub out when it's not TCP? Any other ideas?
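The substitution itself is a one-word change per rule. A minimal sketch of it, applied to rule text rather than to filter.inc directly; the sample rule lines and the interface name em0 are made up for illustration and are not copied from pfSense:

```python
import re

def scrub_in_only(rules: str) -> str:
    """Rewrite every rule line that starts with 'scrub on' to 'scrub in on'."""
    return re.sub(r"^([ \t]*)scrub on\b", r"\1scrub in on", rules, flags=re.MULTILINE)

# Hypothetical ruleset text, for illustration only.
example = (
    "scrub on em0 all fragment reassemble\n"
    "pass in on em0 all\n"
)
print(scrub_in_only(example))
```

Lines that already read "scrub in on" are left untouched, so the rewrite can be applied more than once without changing the result.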

I think this bug wasn't present on 2.0.0.

Thank you!

Updated by Dominic Blais almost 11 years ago

I just realized that random-id would still apply to all packets coming in on another interface (LAN, etc.) before they exit via WAN. So, if there's no need to randomize pfSense's own packets, "scrub in on" could be used.

Updated by JD - almost 11 years ago

I can somewhat confirm this with the following scenario:

- Central Office
  - OVPN Server (TCP, AES-256-CBC, LZO, PSK, IF:ovpns2)
  - SNMP management console in connected LAN/DMZ
  - Version 2.2.2
- Office Branch
  - OVPN Client
  - SNMP agent (listening on 161/udp)
  - Version 2.1.5

Connecting to the WebUI, ssh, or any other TCP-based service on the branch firewall from a LAN connected to the central office works just fine.

However, when the SNMP management console (or any host within a connected LAN) tries to connect to the SNMP agent via 161/udp, packets seem to get scrubbed when returning from the branch firewall through the ovpn interface (ovpns2):

[2.2.2-RELEASE][root@central]/root: tcpdump -nettti pflog0 host X.X.X.X

tcpdump: WARNING: pflog0: no IPv4 address assigned

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on pflog0, link-type PFLOG (OpenBSD pflog file), capture size 65535 bytes

00:00:28.658768 rule 9..16777216/0(match): block in on ovpns2: IP0 bad-len 0

00:00:01.003378 rule 9..16777216/0(match): block in on ovpns2: IP14 bad-len 0

00:00:00.999763 rule 9..16777216/0(match): block in on ovpns2: IP9 bad-len 0

The ovpns2 interface is assigned its own logical OPT interface. Now, when creating a firewall rule on that interface explicitly allowing return traffic to pass from the SNMP agent IP address to the SNMP management console, everything works as expected.

Updated by Phillip Davis almost 11 years ago

- File VM-network.png added

Maybe my situation is also related to this in some way. Large pings (and, I guess, other large packets) do not get through from the branch office LAN to the main office LAN across an OpenVPN site-to-site link. I posted recently in the forum:

https://forum.pfsense.org/index.php?topic=96043.0

That post was a bit of a wall of text, so understandably no response :)

I have recreated this in a (mostly) VM setup, as per the attached diagram. The pfSense-A VM (10.49.35.37/22) sits on our real LAN. Behind that is the pfSense-B real VM, and behind that is my VirtualBox host-only network, which connects my laptop (192.168.56.1) to the pfSense-B LAN (192.168.56.2). The pfSense-A VM has hybrid NAT, and I have told it to NAT 192.168.56.0/24 out on WAN. That way I can ping from my laptop (192.168.56.1) through all of this to devices on 10.49.32.0/22; the traffic gets NATed out, and real devices like 10.49.32.9 can answer easily.

With pings up to 1472 bytes I get good packets back and forth:

From Client-B 192.168.56.1

ping 10.49.32.9 -l 1472 -t

pfSense-B OpenVPN packet capture:

17:08:08.257718 IP 192.168.56.1 > 10.49.32.9: ICMP echo request, id 1, seq 15517, length 1480

17:08:08.260448 IP 10.49.32.9 > 192.168.56.1: ICMP echo reply, id 1, seq 15517, length 1480

pfSense-A OpenVPN packet capture:

17:08:54.247187 IP 192.168.56.1 > 10.49.32.9: ICMP echo request, id 1, seq 15562, length 1480

17:08:54.248338 IP 10.49.32.9 > 192.168.56.1: ICMP echo reply, id 1, seq 15562, length 1480

pfSense-A front-side packet capture:

17:06:33.947095 IP 10.49.35.37 > 10.49.32.9: ICMP echo request, id 50743, seq 15422, length 1480

17:06:33.948408 IP 10.49.32.9 > 10.49.35.37: ICMP echo reply, id 50743, seq 15422, length 1480

Increase the ping to 1473 bytes and it has to be fragmented. This is the result:

ping 10.49.32.1 -l 1473 -t

pfSense-B OpenVPN packet capture:

17:10:49.271944 IP 192.168.56.1 > 10.49.32.9: ICMP echo request, id 1, seq 15677, length 1480

17:10:49.271966 IP 192.168.56.1 > 10.49.32.9: ip-proto-1

17:10:49.274387 IP 172.16.0.1 > 192.168.56.1: ICMP host 10.49.32.9 unreachable, length 36

pfSense-A OpenVPN packet capture:

17:09:45.039423 IP 192.168.56.1 > 10.49.32.9: ICMP echo request, id 1, seq 15610, length 1480

17:09:45.039486 IP 172.16.0.1 > 192.168.56.1: ICMP host 10.49.32.9 unreachable, length 36

17:09:45.039517 IP 192.168.56.1 > 10.49.32.9: ip-proto-1

pfSense-A front-side packet capture:

17:10:23.128146 IP 10.49.35.37 > 10.49.32.9: ICMP echo request, id 50743, seq 15650, length 1481
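The sizes in these captures follow from header arithmetic. A minimal sketch, assuming a 1500-byte MTU, a 20-byte IPv4 header without options, and an 8-byte ICMP echo header; the function names are made up for the example:

```python
IP_HEADER = 20    # IPv4 header without options (assumption)
ICMP_HEADER = 8   # ICMP echo header

def max_unfragmented_ping(mtu: int = 1500) -> int:
    """Largest 'ping -l' data size that still fits in one IP packet."""
    return mtu - IP_HEADER - ICMP_HEADER

def ping_fragments(data_len: int, mtu: int = 1500) -> list:
    """Return (offset, ip_total_length) for each fragment of an echo request."""
    ip_payload = data_len + ICMP_HEADER      # the ICMP length tcpdump reports
    per_frag = (mtu - IP_HEADER) // 8 * 8    # payload bytes per fragment
    return [(off, min(per_frag, ip_payload - off) + IP_HEADER)
            for off in range(0, ip_payload, per_frag)]

print(max_unfragmented_ping())   # 1472
print(ping_fragments(1472))      # [(0, 1500)]
print(ping_fragments(1473))      # [(0, 1500), (1480, 21)]
```

With 1473 bytes of data the ICMP message is 1481 bytes, so the first fragment carries 1480 bytes and the second carries the single remaining byte. That matches the "length 1480" line followed by a bare ip-proto-1 fragment, and the 1481-byte reassembled packet on the front side.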

2 problems here:

a) pfSense-A OpenVPN sends an "unreachable" response back to 192.168.56.1 - seemingly before it even receives the 2nd fragment to reassemble.

b) pfSense-A front side at least claims to send a 1481 byte payload packet out to 10.49.32.9 - why would it even send anything onwards if it has sent an "unreachable" response? And then an over-size packet has been sent out, I guess because "scrub on WAN fragment reassemble" is in effect.

Maybe if this test environment example is sorted out, then other things will also start working?

I will try some variations with "scrub in on WAN" ...

Updated by Chris Buechler over 10 years ago

- Description updated (diff)

- Category changed from Unknown to Operating System

- Status changed from New to Confirmed

- Assignee set to Chris Buechler

- Target version set to 2.2.5

- Affected Version changed from 2.2.2 to 2.2.x

I hit this issue with a customer last week. Worked fine after disabling scrub. I have pcaps from their traffic (UBZ-69510) and should be able to replicate.

Updated by Chris Buechler over 10 years ago

- Target version changed from 2.2.5 to 2.3

Updated by Jim Thompson over 10 years ago

- Assignee changed from Chris Buechler to Luiz Souza

- Priority changed from High to Normal

Updated by Luiz Souza about 10 years ago

- Target version changed from 2.3 to 2.3.1

Updated by Chris Buechler about 10 years ago

- Target version changed from 2.3.1 to 2.3.2

Updated by Chris Buechler almost 10 years ago

- Target version changed from 2.3.2 to 2.4.0

Updated by Remko Lodder almost 10 years ago

Chris Buechler wrote:

I hit this issue with a customer last week. Worked fine after disabling scrub. I have pcaps from their traffic (UBZ-69510) and should be able to replicate.

I see similar issues with:

GIF interface over IPsec (MTU 1280): the pflog0 output constantly shows 'bad-len', and any packet going out to the internet (mostly TCP, by the way) triggers an ICMP Unreachable notice when the SYN/ACK comes back.

Updated by Dominic Blais over 9 years ago

Remko Lodder wrote:

Chris Buechler wrote:

I hit this issue with a customer last week. Worked fine after disabling scrub. I have pcaps from their traffic (UBZ-69510) and should be able to replicate.

I see similar issues with:

GIF interface over IPsec (MTU 1280): the pflog0 output constantly shows 'bad-len', and any packet going out to the internet (mostly TCP, by the way) triggers an ICMP Unreachable notice when the SYN/ACK comes back.

I think the fix is quite simple: just replace "scrub on" with "scrub in on" in the two lines of /etc/inc/filter.inc that start with "scrub on". Just add the "in" and everything will be fine in every case. I haven't found a reason to keep scrubbing outgoing traffic.

Updated by Luiz Souza over 9 years ago

Remko Lodder wrote:

Chris Buechler wrote:

I hit this issue with a customer last week. Worked fine after disabling scrub. I have pcaps from their traffic (UBZ-69510) and should be able to replicate.

I see similar issues with:

GIF interface over IPsec (MTU 1280): the pflog0 output constantly shows 'bad-len', and any packet going out to the internet (mostly TCP, by the way) triggers an ICMP Unreachable notice when the SYN/ACK comes back.

I fixed the issue with pflog0 output (corrupted output in tcpdump - 'bad-len').

That was a different issue, but it is now fixed in 2.4.

Updated by Luiz Souza over 9 years ago

- Status changed from Confirmed to Feedback

I tested the forwarding of fragmented ICMP and UDP packets and they seem to be working as expected on 2.4.

Could someone else who is affected by this issue confirm that?

Thanks!

PS: 2.4 is almost reaching beta state, so beware...

Updated by Richard Gate about 9 years ago

Hi, I've hit this problem with UDP packets for RADIUS authentication when using a pfSense IPSec tunnel from an AP doing 802.1X EAP/TLS.

I found that applying the changes to /etc/inc/filter.inc, as described by Dominic Blais (many thanks for that by the way), fixed the problem.

I just wondered if there was an official pfSense patch available for this.

Thanks to all.

Updated by Luiz Souza about 9 years ago

Richard Gate wrote:

Hi, I've hit this problem with UDP packets for RADIUS authentication when using a pfSense IPSec tunnel from an AP doing 802.1X EAP/TLS.

I found that applying the changes to /etc/inc/filter.inc, as described by Dominic Blais (many thanks for that by the way), fixed the problem.

I just wondered if there was an official pfSense patch available for this.

Thanks to all.

Richard, which pfSense version are you running?

Updated by Richard Gate about 9 years ago

Luiz Otavio O Souza wrote:

Richard, which pfSense version are you running?

Latest 2.3.3_1

Updated by ryon m about 9 years ago

I'm having this issue with 2.4:

- v2.4.0.b.20170429.0121 running on both firewalls

- local pf is virtualized, official virtual appliance upgraded to 2.4

- remote pf is a Netgate SG-8860

OpenVPN tunnel settings (on both sides):

tun-mtu 1500;

fragment 1400;

mssfix;

From a local windows system (10.3.70.40) to remote windows system (10.11.2.15):

TEST #1

ping -l 1500 10.11.2.15

Pinging 10.11.2.15 with 1500 bytes of data:

Request timed out.

Request timed out.

Request timed out.

Request timed out.

Ping statistics for 10.11.2.15:

Packets: Sent = 4, Received = 0, Lost = 4 (100% loss),

TEST #2

ping 10.11.2.15

Pinging 10.11.2.15 with 32 bytes of data:

Reply from 10.11.2.15: bytes=32 time=10ms TTL=126

Reply from 10.11.2.15: bytes=32 time=8ms TTL=126

Reply from 10.11.2.15: bytes=32 time=10ms TTL=126

Reply from 10.11.2.15: bytes=32 time=10ms TTL=126

Ping statistics for 10.11.2.15:

Packets: Sent = 4, Received = 4, Lost = 0 (0% loss),

Approximate round trip times in milli-seconds:

Minimum = 8ms, Maximum = 10ms, Average = 9ms

PCAP (local pf):

23:29:41.289185 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6070, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 117, length 1480

23:29:41.289211 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6070, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:29:46.051239 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6087, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 118, length 1480

23:29:46.051253 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6087, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:29:51.051842 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6090, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 119, length 1480

23:29:51.051892 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6090, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:29:56.051842 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6119, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 120, length 1480

23:29:56.051863 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6119, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:30:07.883817 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6135, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 121, length 40

23:30:07.893752 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14684, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 121, length 40

23:30:08.886027 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6139, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 122, length 40

23:30:08.893659 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14685, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 122, length 40

23:30:09.889025 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6142, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 123, length 40

23:30:09.898638 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14686, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 123, length 40

23:30:10.892094 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6146, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 124, length 40

23:30:10.901171 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14687, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 124, length 40

PCAP (remote pf)

23:29:41.290461 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6070, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 117, length 1480

23:29:41.290560 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6070, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:29:46.051719 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6087, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 118, length 1480

23:29:46.051820 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6087, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:29:51.053010 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6090, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 119, length 1480

23:29:51.053098 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6090, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:29:56.053048 AF IPv4 (2), length 1504: (tos 0x0, ttl 127, id 6119, offset 0, flags [+], proto ICMP (1), length 1500)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 120, length 1480

23:29:56.053142 AF IPv4 (2), length 52: (tos 0x0, ttl 127, id 6119, offset 1480, flags [none], proto ICMP (1), length 48)

10.3.70.40 > 10.11.2.15: ip-proto-1

23:30:07.884171 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6135, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 121, length 40

23:30:07.885574 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14684, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 121, length 40

23:30:08.886693 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6139, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 122, length 40

23:30:08.887459 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14685, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 122, length 40

23:30:09.889196 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6142, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 123, length 40

23:30:09.892332 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14686, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 123, length 40

23:30:10.893031 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 6146, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 124, length 40

23:30:10.894837 AF IPv4 (2), length 64: (tos 0x0, ttl 127, id 14687, offset 0, flags [none], proto ICMP (1), length 60)

10.11.2.15 > 10.3.70.40: ICMP echo reply, id 1, seq 124, length 40

This problem is ultimately impacting my SIP phone system, which sends jumbo UDP packets. I've tried numerous OpenVPN settings, as well as turning off pf scrubbing, either via the GUI (which breaks my system) or via the hacks above (which didn't help).

Updated by ryon m about 9 years ago

Conducted another test:

(From my workstation 10.3.70.40)

ping 10.11.2.15

ping -l 1500 10.11.2.15

(From my virtualized pfSense)

root: tcpdump -i ovpnc1 -nettti pflog0 -vv host 10.3.70.40

tcpdump: listening on pflog0, link-type PFLOG (OpenBSD pflog file), capture size 262144 bytes

00:00:00.000000 rule 362/0(match): pass in on vmx1: (tos 0x0, ttl 128, id 19745, offset 0, flags [none], proto ICMP (1), length 60)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 13, length 40

00:00:14.649977 rule 362/0(match): pass in on vmx1: (tos 0x0, ttl 128, id 19756, offset 0, flags [none], proto ICMP (1), length 1528, bad cksum 6bb0 (->8b94)!)

10.3.70.40 > 10.11.2.15: ICMP echo request, id 1, seq 17, length 1508

2 packets captured

96 packets received by filter

0 packets dropped by kernel

I'm not sure why the bad cksum is being generated. I've tried enabling "Disable hardware checksum offload" in System -> Advanced -> Networking and rebooting the firewall, but I get the same result.

Updated by ryon m over 8 years ago

I am no longer able to troubleshoot this issue, as I switched over to IPsec to resolve my SIP/UDP issue. I was working with Netgate Professional Support on this and was able to get it working at one point, but not due to any technical changes: we ended up rebooting several of my firewalls and it just started working. So it seems there may still be some intermittent or timing issue, possibly even something with my ISPs.

As far as I'm concerned this issue was resolved.

Maybe the creator of the ticket has an update.

Updated by Constantine Kormashev over 8 years ago

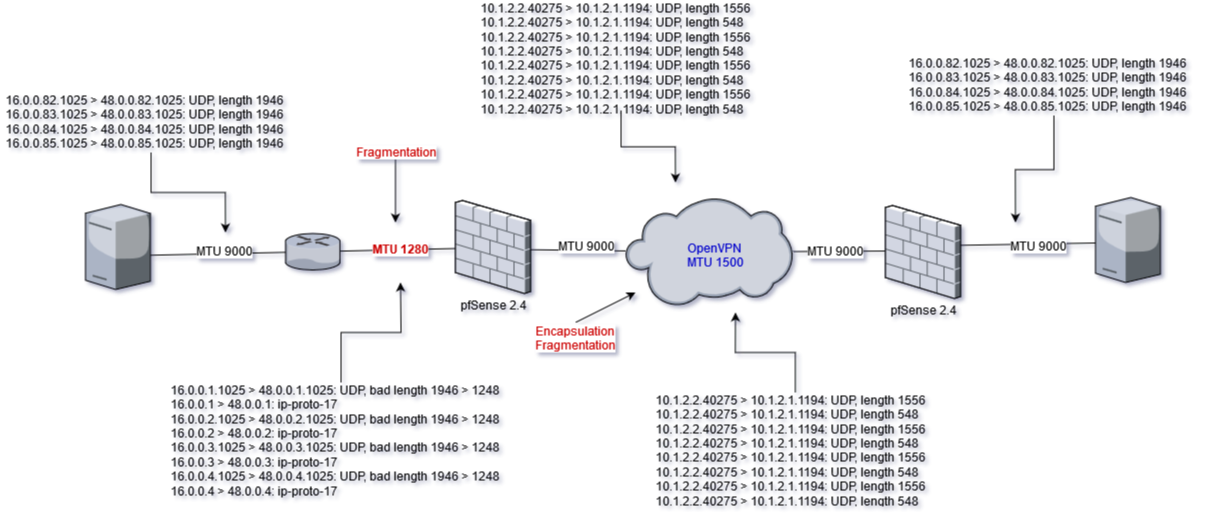

- File diagram.png added

I built a lab in order to reproduce the issue, but could not reproduce it.

I tried 2 KB frames, and they were correctly fragmented all along the path. I added a short tcpdump output for each node, which makes it possible to see what happened on the path between nodes.

Moreover, I experimented with the OpenVPN MTU. It is possible to avoid fragmentation between OpenVPN nodes by setting a proper MTU size with the tun-mtu option, but in that case the IP MTU between the OpenVPN nodes has to be big enough, because OpenVPN encapsulation increases the packet size by 32 B.
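As a rough sketch of that last point: the 32-byte overhead is the figure quoted in this comment and is treated here as an assumption, since the real overhead depends on the cipher, HMAC, and transport in use. The function names are made up for the example.

```python
OPENVPN_OVERHEAD = 32  # bytes; figure from this comment, an assumption, not a constant

def required_link_mtu(tun_mtu: int, overhead: int = OPENVPN_OVERHEAD) -> int:
    """IP MTU needed between the OpenVPN nodes to carry a full tunnel packet."""
    return tun_mtu + overhead

def needs_fragmentation(tun_mtu: int, link_mtu: int,
                        overhead: int = OPENVPN_OVERHEAD) -> bool:
    """True if a full-size tunnel packet would not fit the link in one piece."""
    return required_link_mtu(tun_mtu, overhead) > link_mtu

print(required_link_mtu(1500))          # 1532
print(needs_fragmentation(1500, 1500))  # True
print(needs_fragmentation(1400, 1500))  # False
```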